Keeping Python competitive

I have been trying to find ways to make Python more efficient for many years; see for example my discussion at the Language Summit during PyCon US 2017: Keeping Python competitive (LWN article); slides. At EuroPython 2019 (Basel), I gave the keynote "Python Performance: Past, Present and Future": slides and video. I gave my vision of Python performance and listed 3 projects to speed up Python that I consider realistic:

- subinterpreters: see Eric Snow's multi-core-python project

- better C API: see HPy (new C API) and pythoncapi.readthedocs.io

- tracing garbage collector for CPython

This article is about subinterpreters.

Subinterpreters

Eric Snow has been working on subinterpreters since 2015; see his first blog post published in September 2016: Solving Multi-Core Python. See Eric Snow's multi-core-python project wiki for the whole history.

In September 2017, he wrote a concrete proposal: PEP 554: Multiple Interpreters in the Stdlib.

Eric mentions PEP 432: Simplifying the CPython startup sequence as one blocker. I fixed this issue (at least for the subinterpreters case) with my PEP 587: Python Initialization Configuration, which I implemented in Python 3.8.

Sadly, implementing subinterpreters in the 30-year-old CPython project is hard since a lot of code has to be updated. CPython is made of no fewer than 603K lines of C code (and 815K lines of Python code)!

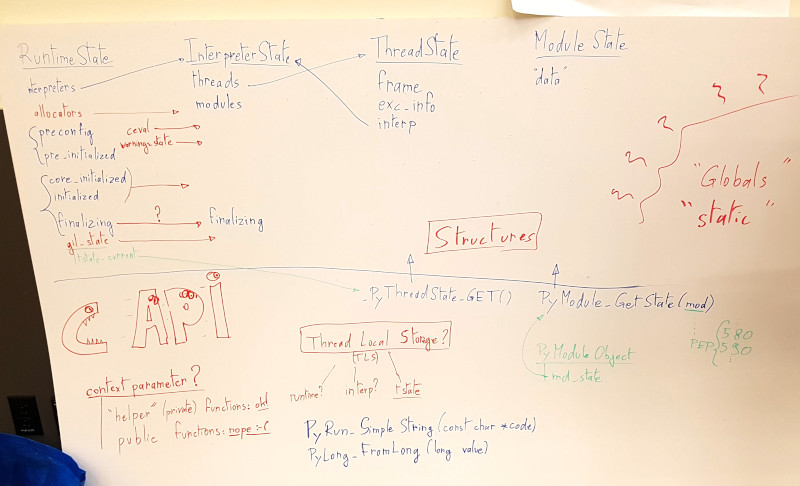

In May 2018, at the CPython sprint during PyCon US, I discussed subinterpreters with Eric Snow and Nick Coghlan. I drew an overview of Python internals and the different "states" on a whiteboard:

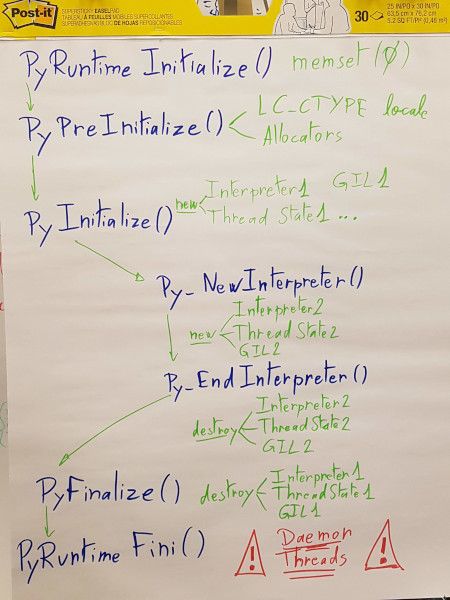

Python and Python subinterpreter lifecycles (creation and finalization):

As a follow-up to this meeting, I wrote down the current state and what should be done: Reorganize Python “runtime”.

Getting the current Python thread state

In the current master branch of Python, getting the current Python thread state is done using these two macros:

```c
#define _PyRuntimeState_GetThreadState(runtime) \
    ((PyThreadState*)_Py_atomic_load_relaxed(&(runtime)->gilstate.tstate_current))

#define _PyThreadState_GET() _PyRuntimeState_GetThreadState(&_PyRuntime)
```

These macros depend on the global _PyRuntime variable, the single instance of the _PyRuntimeState structure. There is exactly one instance of _PyRuntimeState: it holds data shared by all interpreters on purpose (more info about _PyRuntimeState below).

_Py_atomic_load_relaxed() uses an atomic operation, which may become a performance issue if Python is modified to get the Python thread state in more places. I tried to check whether it uses a slow atomic read instruction, but it seems that only a write uses an explicit memory fence operation: a read seems to be "free" (it's a regular, efficient MOV instruction). I only checked the x86-64 machine code; it may be different on other architectures.
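To make the idea of a relaxed atomic read more concrete, here is a minimal sketch using the standard C11 `<stdatomic.h>` API instead of CPython's own `_Py_atomic_*` wrappers. All the `sketch_*` names are hypothetical stand-ins invented for this illustration; they are not the real CPython definitions.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>

/* Hypothetical stand-in for PyThreadState (not the real structure). */
typedef struct {
    int id;
} sketch_tstate;

/* Hypothetical stand-in for the gilstate.tstate_current field. */
typedef struct {
    _Atomic(sketch_tstate *) tstate_current;
} sketch_runtime;

/* A single process-wide instance, playing the role of _PyRuntime. */
static sketch_runtime sketch_runtime_instance;

/* Relaxed load: the read itself is atomic, but it imposes no ordering
   on the memory accesses around it. */
static inline sketch_tstate *
sketch_get_tstate(sketch_runtime *runtime)
{
    assert(runtime != NULL);
    return atomic_load_explicit(&runtime->tstate_current,
                                memory_order_relaxed);
}

/* Relaxed store, used here only to have something to read back. */
static inline void
sketch_set_tstate(sketch_runtime *runtime, sketch_tstate *tstate)
{
    assert(runtime != NULL);
    atomic_store_explicit(&runtime->tstate_current, tstate,
                          memory_order_relaxed);
}
```

Compiling such a function with optimizations and looking at the generated assembly is one way to check which instruction a given compiler and architecture actually emit for the read.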

GIL state

Currently, the _PyRuntimeState structure has a gilstate field which is shared between all subinterpreters. The long-term goal of PEP 554 (subinterpreters) is to have one GIL per subinterpreter in order to execute multiple interpreters in parallel. Currently, only one interpreter can be executed at a time: there is no parallelism, except if a thread releases the GIL, which is not the common case.

This work is tracked by these two issues:

- Make the PyGILState API compatible with multiple interpreters

- Support subinterpreters in the GIL state API

I expect that fixing this issue may require adding a lock somewhere, which can hurt performance, depending on how the GIL state is accessed.

Passing a state to internal function calls

To avoid any risk of a performance penalty from upcoming Python internal changes for subinterpreters, but also to make things more explicit, I proposed explicitly passing "a state" to internal C function calls.

First, it wasn't obvious which "state" should be passed: _PyRuntimeState, PyThreadState, a structure containing both, or something else?

Moreover, it was unclear how to get the runtime from PyThreadState, and how to get PyThreadState from the runtime.

I started to pass runtime to some functions (_PyRuntimeState): Pass _PyRuntimeState as an argument rather than using the _PyRuntime global variable.

Then I pushed more changes to pass tstate to some other functions (PyThreadState): Pass explicitly tstate to function calls.

I added PyInterpreterState.runtime so getting _PyRuntimeState from PyThreadState is now done using: tstate->interp->runtime. It's no longer necessary to pass both runtime and tstate to internal functions: tstate is enough.

Slowly, I modified the internals to only pass tstate to internal functions: tstate should become the root object to access all Python states.
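The chain from thread state to runtime can be sketched with simplified structures. The `Mock*` types and `mock_*` functions below are hypothetical and only mirror the shape of `tstate->interp->runtime`; the real CPython structures have many more fields.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical, heavily simplified mirror of _PyRuntimeState. */
typedef struct MockRuntimeState {
    int initialized;
} MockRuntimeState;

/* Hypothetical mirror of PyInterpreterState with its runtime back-pointer. */
typedef struct MockInterpreterState {
    MockRuntimeState *runtime;
    int id;
} MockInterpreterState;

/* Hypothetical mirror of PyThreadState. */
typedef struct MockThreadState {
    MockInterpreterState *interp;
} MockThreadState;

/* tstate is the root object: the runtime is reached through it,
   without reading any global variable. */
static MockRuntimeState *
mock_runtime_from_tstate(MockThreadState *tstate)
{
    assert(tstate != NULL && tstate->interp != NULL);
    return tstate->interp->runtime;
}

/* An internal function only needs tstate as its state argument. */
static int
mock_is_runtime_initialized(MockThreadState *tstate)
{
    return mock_runtime_from_tstate(tstate)->initialized;
}
```

With this shape, a caller that already holds tstate pays only two pointer dereferences to reach the runtime, and the dependency of each function on its state is visible in its signature.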

I finished by starting a thread on the python-dev mailing list to summarize this work: Pass the Python thread state to internal C functions. The feedback was quite positive: most core developers agreed that passing tstate explicitly is a good practice and that the work should be continued.

_PyRuntimeState and PyInterpreterState

Currently, some _PyRuntimeState fields are shared by all interpreters, whereas they should be moved into PyInterpreterState: it's still a work in progress.

For example, I continued the work started by Eric Snow to move the garbage collector state from _PyRuntimeState to PyInterpreterState: GC operates out of global runtime state.

As explained above, another example is gilstate, which should also be moved to PyInterpreterState, but that's a complex change that must be carefully prepared so as not to break anything.
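As an illustration of what moving a piece of state into the interpreter means, the sketch below keeps a toy garbage collector state inside each interpreter structure. The `Toy*` types and `toy_collect()` are hypothetical and far simpler than the real GC state; the point is only that two interpreters no longer share the same counters.

```c
#include <assert.h>

/* Hypothetical, minimal GC state (the real one has generations, etc.). */
typedef struct ToyGCState {
    int collecting;    /* non-zero while a collection is running */
    long collections;  /* number of completed collections */
} ToyGCState;

/* The GC state lives in the interpreter, not in a shared runtime. */
typedef struct ToyInterpreterState {
    ToyGCState gc;
} ToyInterpreterState;

/* Run one "collection" that only touches the given interpreter's state. */
static void
toy_collect(ToyInterpreterState *interp)
{
    ToyGCState *gc = &interp->gc;
    assert(!gc->collecting);  /* no reentrant collection */
    gc->collecting = 1;
    /* A real collector would traverse this interpreter's objects here. */
    gc->collections++;
    gc->collecting = 0;
}
```

Running `toy_collect()` on one `ToyInterpreterState` leaves the counters of any other instance untouched, which is the isolation property the move is after.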

More subinterpreter work

Implementing subinterpreters also requires cleaning up various parts of Python internals.

For example, I modified Python so Py_NewInterpreter() and Py_EndInterpreter() (create and finalize a subinterpreter) share more code with Py_Initialize() and Py_Finalize() (create and finalize the main interpreter): new_interpreter() should reuse more Py_InitializeFromConfig() code.

There are still many issues to be fixed: it's moving slowly but steadily!